A chatbot creates a unique user experience with many benefits. It gives the audience an opportunity to ask questions and get to know more about your organization. It allows you to collect valuable information from the audience. It can increase interaction time on your site.

In the spring of 2017, our Knight Lab team examined the conversational user interface of Public Good Software’s chatbot, a chat widget embedded within media partner sites. Public Good’s goal is to turn important news into meaningful change. It uses its database, machine learning and artificial intelligence to suggest actions users can take on the causes, campaigns and organizations they care about most. This interaction begins with a chatbot that appears at the end of an article.

After 3 months of research, prototyping and testing, we wrote this best practice guide to inform others building their first chatbots.

Recommendations

Start with these questions

Who is this bot for?

Before you dive into building a bot, you need to understand how the equivalent task typically occurs in offline environments and your user’s typical attitude towards the task. This will show you how your bot might improve upon the existing interaction, and it will shape the tone and content of your bot. Since our user testing focused on Northwestern students, we conducted six interviews on students’ attitudes towards volunteering.

Why a bot?

Chatbots are ideal in settings where a conversational, friendly tone enhances the experience. However, they can create unnecessary frustration when used without a strong reason. For example, if we were trying to share this large amount of information with you in a chatbot format, you might be feeling annoyed and frustrated right now.

Public Good Software (or PGS, for short) decided a bot would be a good fit, knowing that volunteering and positive relationships with nonprofits are often built upon human-to-human interaction. Thus, a bot could be more personable and build more trust than a different online experience.

What does it do?

Have a sense of what you want the user to accomplish through their interaction with the bot. Think through both the overall goal of a successful interaction, as well as smaller questions you might want answered throughout the experience.

With our bot, our metric for success was collecting an email address so we could follow up about nonprofits the audience might be interested in. However, we also wanted to collect a user’s location if they wanted to make an impact locally, and to learn a user’s skills if they were interested in skills-based volunteering. In addition, we set out to include the social aspect of volunteering in our conversation and be as transparent about the impact and commitment of each nonprofit as possible.

Writing the Scripts

Establish credibility as soon as possible

Just by reading the opening line of the conversation, the audience should be able to know “who” they are talking with. If possible, leverage existing brand knowledge. Since Public Good is a bridge between nonprofits and media organizations, there are multiple brands which might be used to provide context and credibility. We conducted a small survey to test out “who” the bot should represent in order to gain the most trust from users.

During the survey, we prompted testers with a Chicago Tribune article about Chance the Rapper supporting Chicago Public Schools. Once the testers were primed, we had them select one of four conversation starters with our chatbot.

- Option A (50% of participants chose this option) starts the bot conversation by introducing the Tribune again.

- Option B (13.6%) continues the conversation about Chicago public education and introduces Public Good.

- Option C (22.7%) introduces an educational nonprofit.

- Option D (13.6%) asks the audience if they want to take an action.

Half of our 22 participants preferred A, with a fairly even spread amongst the other three options. The Chicago Tribune’s brand gave the bot more credibility and allowed the audience to take a mental shortcut by choosing a brand they already knew. On the other hand, since Public Good offered no brand recognition, it actually deterred a few users because they did not know who they were interacting with. Users also felt confused by Option D because it lacked context.

Be straightforward

Transparency is key. Make it clear what people can do with your chatbot as early as possible. Within the first one or two steps of the interaction, users should know what they will spend their time on and what results they will achieve. If you need a specific type of response from your user, make sure that request is made explicitly and simply.

In another survey that began with the same Chicago Tribune article, we learned that people want to know what they can gain from an interaction with the bot. It’s great that our bot could sift through nonprofits by location, but they wanted to know how the bot could help them interact with those organizations first and foremost.

- Option A (44.4% of participants chose this option): next steps based on end results

- Option B (11.1%): next step based on location

- Option C (22.2%): next step based on nonprofit recommendations

- Option D (22.2%): next step based on giving more articles to read

Validate the user’s responses frequently

Validation assures users that the bot is working correctly, building the trust necessary to keep people engaged with your bot. Confirmations make the user feel listened to. Also, from a technical perspective, a confirmation step helps you link the response to your existing data. For example, after we ask the user to input their interest, we send a confirmation message that checks their input against our categories.
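As a rough illustration of that confirmation step, the sketch below matches a typed interest against a short list of categories and builds the confirmation message. The category list, the function name `confirm_interest` and the wording of the replies are all hypothetical, and the fuzzy matching uses Python's standard `difflib` module rather than anything from our prototype.

```python
from difflib import get_close_matches

# Hypothetical cause categories; a real list would come from your own data.
CATEGORIES = ["education", "environment", "health", "animals"]


def confirm_interest(user_input, categories=CATEGORIES):
    """Return (matched_category_or_None, bot_message) for a typed interest."""
    cleaned = user_input.strip().lower()
    # Tolerate small typos by accepting the closest category above a cutoff.
    matches = get_close_matches(cleaned, categories, n=1, cutoff=0.6)
    if matches:
        category = matches[0]
        return category, f"Got it! You're interested in {category}, right?"
    # No category was close enough, so ask again instead of guessing.
    return None, "Sorry, I didn't catch that. Could you try another word for the cause you care about?"


category, message = confirm_interest("Educaton")
print(message)
```

Echoing the matched category back gives the user a chance to correct the bot before it searches for organizations.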

Create a Friendly, Yet Simple, Tone

Maintain a conversational tone to keep the interaction fun. Use emojis if it feels appropriate and don’t be afraid to be a little informal. A simple joke can also be a great fail-safe for potential errors! Just make sure that your tone stays consistent throughout the bot.

Using jokes to nudge users toward the choice you prefer works as well. For instance, when we tried to collect users’ emails, we gave users two options, "Sure!" and “I’m not here to help” (see the picture), to make users feel guilty about selecting the second option.

However, don’t add complexity to the interaction for the sake of tone. In one of our prototypes, we asked users for their names to address them personally throughout the conversation. We wanted to test whether this would create a stronger personal connection with users. However, we found that many were hesitant to provide their real names, and this just added more clicks before the user could get something meaningful out of the interaction.

Find a way to end the conversation

Or maybe a loop works

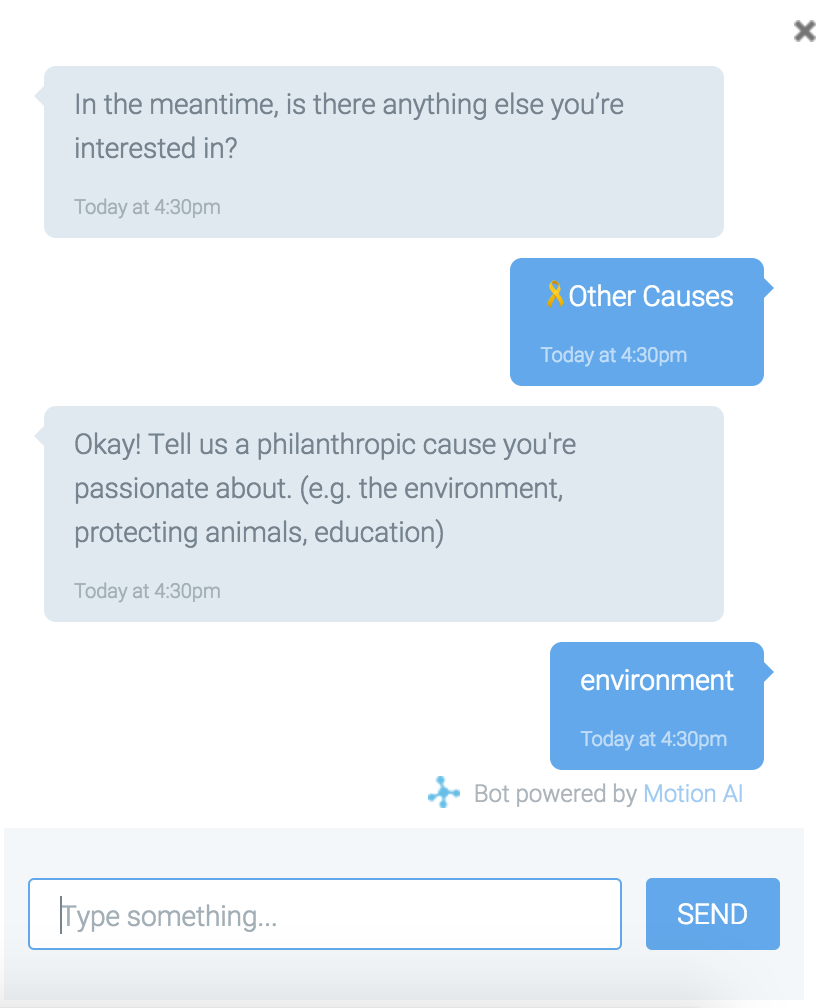

Our testing included chat experiences that allowed the user to continue or leave the conversation at different points within the interaction. The chat is intended to help PGS onboard new users, but most importantly, we needed to ensure every point in the interaction provides some form of value for end users. Adding value meant finding a balance between looping certain elements of the conversation and allowing a user to end that conversation voluntarily. For our chatbot, we specifically wanted to give the user the ability to explore multiple causes they care about and the matching organizations. However, once the user had gotten through one loop and we had collected their email and interest information, we chose to give them the option to explore additional causes or end the conversation.

In our user prototype testing, several users noted that they weren’t sure where the conversation would end or how to navigate the conversation once they had the information they needed. Finding the right way to loop and end the conversation made the interaction feel more natural. The photo below shows the end of each path, and how we tried to loop the audience back to explore more options. We also provide the option "Good For Now" to end the conversation and give the audience a complete experience.

Limit the scope of the chatbot

While open text input and natural language processing allow users to have a more self-directed conversation with the bot, we also needed to ensure the direction of the conversation was clear from the beginning. A menu of example options can help guide the user, which we found especially important in the first few interactions, when users are still getting familiar with the bot’s functionality and purpose. Menus work well when there are only a few choices for the user, but when open text input is preferred or there are more than three possible responses, consider giving users an example of an answer (either within the input cell or in the bot message). This will help ensure the conversation continues in a way that makes sense and is valuable to the user.

Additional media: manage size and experience flow

You can also display options as image cards, but be careful about how you use your limited real estate. Most chatbot tools, including Motion.AI, support some additional media within the chat interface. Cards allow you to display an image and link off to a specific URL. For PGS, we used this functionality to display the search results of matching organizations, but in our testing we ran into some issues with users not being able to see the bot messages before or after the cards (see screenshot). While chatbot tools will allow you to customize the size of the cards, the key takeaway here is to work within the limitations of the chatbot screen, and ensure that users can always navigate the conversation based on what is immediately visible.

Be aware of roadblocks

Is requiring personal information worth it if some users abandon the conversation?

Understand your goal (email collection, data collection, conversion) and move users toward that goal throughout the conversation. Ensure that the user has all necessary information and understands the value of the interaction before asking them to move to conversion. Not every user will be ready to give their email or convert from the very beginning, and the bot should be designed to meet multiple user needs. For example, even when we did ask for the user’s email, we also gave them the option to get more information, whether about PGS or the causes they care about. The end goal is the same, but the paths to get there can differ by user, and the chatbot can help guide that process. In the adjacent screenshot, you can see that we provide the option of "No thanks!" to allow the user to continue the bot interaction even if they prefer not to share their email.

Tools for Bot Building

We used a range of tools to build low- and high-fidelity bot prototypes.

Paper Prototypes

Paper prototypes are wonderful initial tools when you are still thinking through your functions and user interface. Each of our team members created at least two versions of the bot, and our paper prototypes brought them to life much faster than building them online would have. We explored functions such as email collection, text input, card display, image display, URLs and emojis.

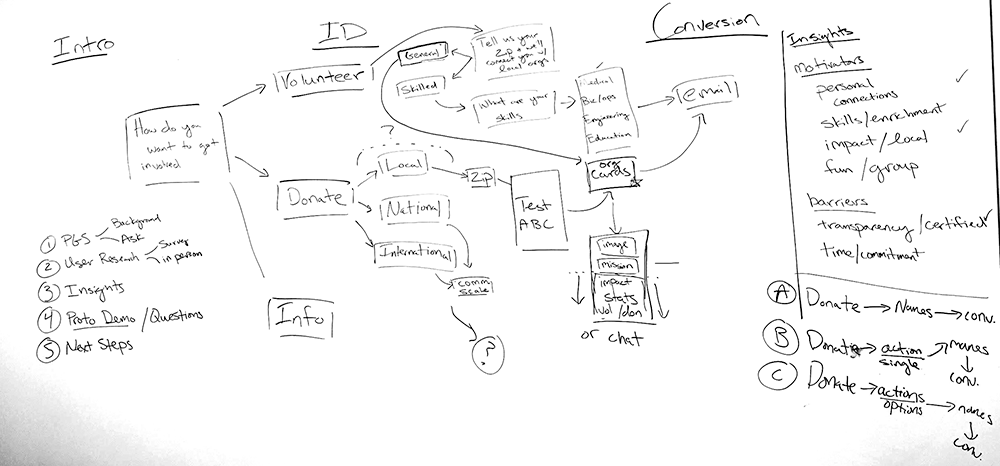

Whiteboard

On a whiteboard, it’s easy to spot dead ends, roadblocks and repetitive paths in the user flow. It gives each team member a quick overview and lets the team make path changes together. For example, the whiteboard helped us see that we asked users for their email twice in some flows.

User Flow for Bot Version 2

User Flow for Bot Version 3

Google doc scripts

Based on the whiteboard user flow, we wrote out the entire script of the bot. To make it easier for our developer to implement the script, we used different colors to show the different paths. We also used double brackets to identify variables like [[cause]], [[number]], [[source]] and [[tag]]. (Link to the entire script.)
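To show how bracketed variables like these might be filled in at run time, here is a minimal sketch. The function name `fill_script` and the sample script line are hypothetical and are not taken from our actual script; only the [[name]] placeholder convention comes from it.

```python
import re

# Matches placeholders written as [[name]], e.g. [[cause]] or [[number]].
PLACEHOLDER = re.compile(r"\[\[(\w+)\]\]")


def fill_script(line, values):
    """Replace each [[name]] in a script line with its value, if one is known."""
    def replace(match):
        name = match.group(1)
        # Leave unknown placeholders untouched so missing data is easy to spot.
        return str(values.get(name, match.group(0)))
    return PLACEHOLDER.sub(replace, line)


# Hypothetical script line for illustration only.
line = "We found [[number]] organizations working on [[cause]]."
print(fill_script(line, {"number": 3, "cause": "education"}))
```

Leaving unknown placeholders in place, rather than replacing them with an empty string, makes gaps in the data visible during testing.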

Google Sheets for tags

For our prototype, PGS provided us with data about nonprofit organizations: their names, types, and descriptions, as well as branding images. We manually assigned keyword synonyms to support more possible input from our users when we ask about their interests. (See the full tag dictionary.)
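A tag dictionary of this kind can be pictured as a mapping from each tag to its keyword synonyms. The sketch below is only an illustration: the tags, synonyms and function names are made up and are not entries from our full tag dictionary.

```python
import re

# Hypothetical tag dictionary: each tag maps to manually assigned synonyms.
TAG_SYNONYMS = {
    "education": ["school", "schools", "teaching", "tutoring"],
    "environment": ["climate", "nature", "recycling"],
}


def build_lookup(tag_synonyms):
    """Invert the dictionary so every keyword points back to its tag."""
    lookup = {}
    for tag, synonyms in tag_synonyms.items():
        lookup[tag] = tag
        for word in synonyms:
            lookup[word] = tag
    return lookup


def find_tags(user_input, lookup):
    """Return the tags mentioned in free text, in order of first appearance."""
    tags = []
    for word in re.findall(r"[a-z]+", user_input.lower()):
        tag = lookup.get(word)
        if tag is not None and tag not in tags:
            tags.append(tag)
    return tags


lookup = build_lookup(TAG_SYNONYMS)
print(find_tags("I care about schools and climate", lookup))
```

An empty result is a signal for the bot to ask again or offer a menu of causes.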

Motion.AI

There are many bot prototyping tools that require zero coding knowledge. We chose Motion.AI because it allows people with no software development background to build a prototype easily by typing in the initial response, prompts, replies and error messages. It also offers options to build more advanced functions, such as geolocation, email and phone number collection, date and time selection, embedded URLs, Bing search, sentiment analysis and sending email. Multiple users can collaborate to build the bot at the same time. You can build your first two bots for free. This level of fidelity allowed us to create working bots that we could update quickly as we got feedback during testing.

Beyond The Bot

Building a successful chatbot experience is less about learning the bot and more about learning your users’ needs and motivations. We studied Yu Kai Chou’s Gamification and Behavioral Design principles to understand human motivation and applied some of the principles in the bot design.

Since our target users are college students, we conducted user interviews to gain an in-depth understanding of why college students want to get involved with nonprofits. While there are technical and data management challenges, we see a case for a chatbot that matches college students’ interests and professional skills with nonprofit organizations.

Volunteer Motivations

We organized in-person interviews to get more in-depth, qualitative information on students’ attitudes towards philanthropy and volunteering. We spoke with six students for about twenty minutes each. The key insights we gained are:

- People give back to feel connected to others. Time and time again, students mentioned how much fun they had volunteering with their friends or how it was a great memory they could share with a community. One student said “We feel very connected to each other,” about his friends that he has volunteered with.

- Many prefer involvement at a local level because they believe local involvement can fulfill their desire for transparency and impact more readily than national organizations. While some were drawn to specific issues that lend themselves to involvement on a global scale, most felt like their contributions were more appreciated when on a local scale. One student said, “If you donate to a local organization, you form a direct relationship you can actually go there and see.”

- Credibility is crucial. People expect transparency about what an organization is doing and how they can help organizations. It is easier for them to trust a well-established organization that they have heard about before than an organization with a small public presence. When speaking about how she chooses to be philanthropically involved, one student said, “If I can get a lot information from their website, I know they are doing something actively.” In addition, people want to know where their money is going. When asked about what made a specific philanthropic experience a positive one, one student said “you really know where the money is going, and who are you saving.”

- Personal connections are a common factor that spurs people to action. One of our interviewees was among the 70% of volunteers at Camp Kesem who have had a loved one battle cancer. Another student’s involvement was tied to Jewish organizations where she felt she had a personal connection. People often seek social justice involvement with a specific goal in mind and they are more motivated to get involved when they find an organization that’s a good fit with their passions. One student said, “[You have to] make sure what people are getting into, before they start volunteering. If you like education, do you like more early education or adult?”

- Experiences must benefit the volunteers as well as those receiving help. Students we spoke to stuck with a cause when they either had fun doing so or felt that they were gaining specific skills. Whether it was learning

See full insights on students’ volunteering motivations here.