We rely on journalists to tell our stories, tell us the stories of others, and provide us with unbiased and important news. Journalism is the cornerstone for holding public officials accountable, keeping the truth transparent, and keeping the public informed. Journalists and newsrooms play a unique and invaluable role, and the stories they tell have an impact like no other. The public (the crowd), however, is central in helping journalists create and transform stories. The crowd’s unique knowledge and experiences, diverse and creative opinions, and sheer numbers make it an invaluable asset for journalists.

The rise of crowdsourcing, the act of using the crowd to help complete tasks, share opinions, or demonstrate expertise, has created a buzz in the tech and media worlds. Crowds have raised over two billion dollars on GoFundMe, identified thousands of images for Google’s self-driving cars, and helped scientists map the brain with Eyewire. Journalists and newsrooms, however, have been limited in their ability to successfully engage the crowd without losing the integrity and direction of their story.

So we ask ourselves: How can journalists and newsrooms expand their crowdsourcing abilities to create successful stories that use the power and diversity of the crowd? How can journalists and newsrooms draw from real-world examples and applications of non-journalistic crowdsourcing projects as a guide for their own crowdsourcing efforts?

To answer these questions, we drew from successful crowdsourced projects and tried to determine the metrics and methods that made them successful. We analyzed over fifty examples of journalistic and non-journalistic crowdsourcing projects, ranging from Eyewire’s brain-mapping game to Wikipedia to Lay’s “Do Us a Flavor” competition. From this analysis, we identified five of the most critical parts of a crowdsourcing project: the original intention, the project’s crowd, the incentives utilized, the callout methods implemented, and the measurement of the project’s success. Within these categories, we identified and defined key terms to navigate current crowdsourcing platforms and created general outlines that can be applied to a variety of crowdsourcing efforts.

Professional journalists, student journalists, and newsrooms can draw on our best practices guide to establish a platform for their project. It is by no means an all-encompassing, rule-by-rule guide; instead, it should act as a template to help journalists better understand their goals and crowd if they decide to undertake a crowdsourcing project. With this guide, we hope to make crowdsourcing more accessible and approachable for all journalists.

“Utilize the crowd, inform the crowd.”

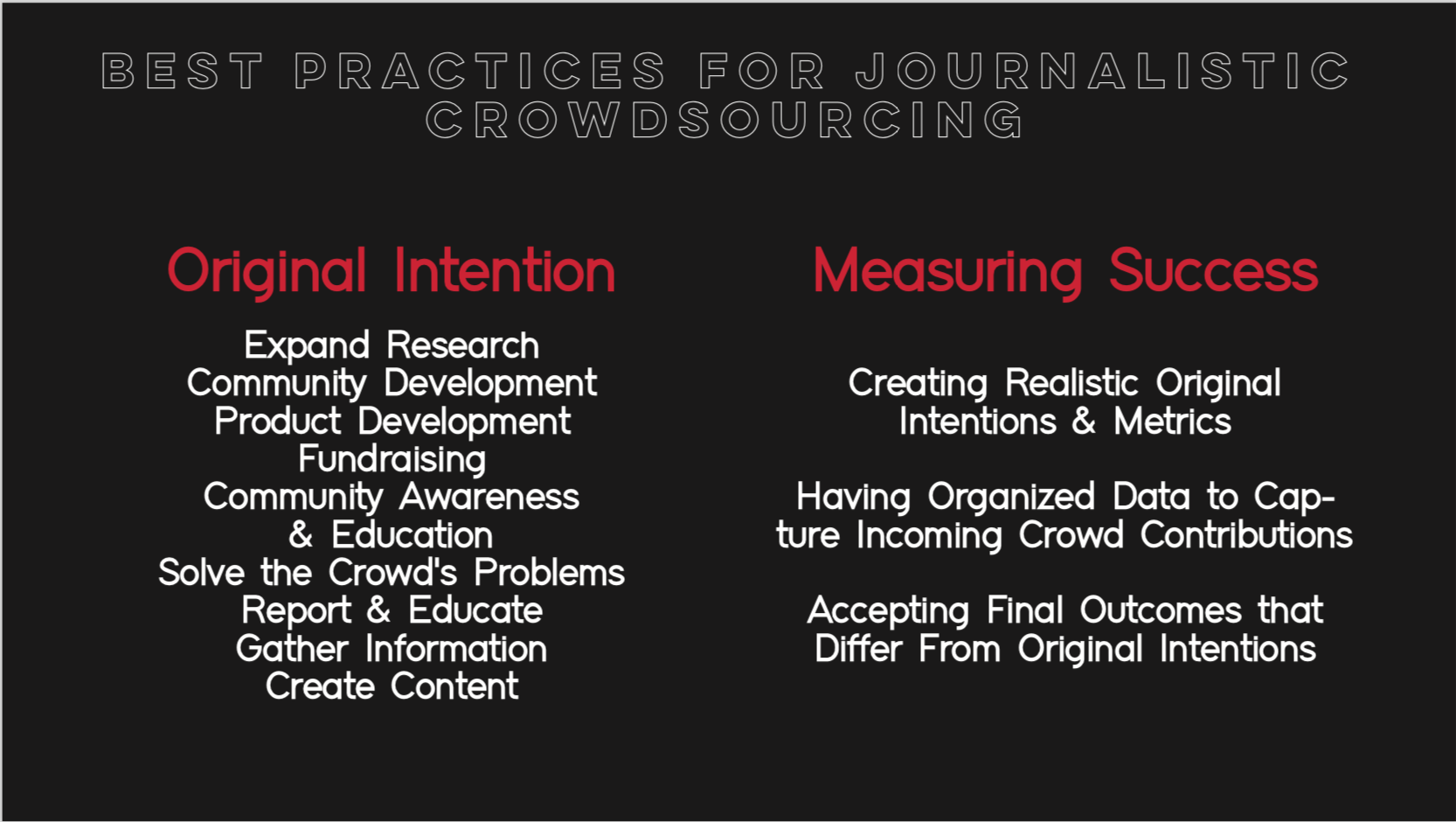

Original Intention

Crowdsourcing for journalism is the act of specifically inviting a group of people to participate in a reporting task (such as news-gathering, data collection, or analysis) through a targeted, open call for input, personal experiences, documents, or other contributions. Crowdsourcing can also serve a variety of purposes, from commercial to charitable. The information gathered from a crowd can be used in many different ways, and in our own findings we identified nine categories that define these general crowdsourcing intentions:

- Expand Research–To expand on an existing user base or create a new user base.

- Community Development–To promote collective action within a community and generate solutions to common problems.

- Product Development–To create products with new or different characteristics that offer new or additional benefits to the customer, based on customer opinion.

- Fundraising–To seek financial support through crowdfunding.

- Community Awareness/Education–To provide information to engage and educate a target audience.

- Solve the Crowd’s Problems–To create a solution to a defined problem through user feedback.

- Report/Educate–To give instructions or present information to a particular field.

- Gather Information–To collect or compile information about a given subject.

- Create Content–To generate any media through user/audience contributions.

With the appropriate callout method, all of these original intentions are achievable. However, it is important to set realistic and measurable intentions. To conduct a crowdsourcing effort, you must reasonably gauge your ability to reach a desired number of people from a target demographic. As further explained in the Measuring Success section, journalists should expect some variation from their original intent due to outliers and the unpredictable nature of human tendencies.

It is important to note that we call this section original intentions, as crowdsourcing efforts can often lead to pivots in intent and outcome.

We highly recommend that journalists and others attempting a crowdsourcing effort think critically about their original intent, as original intentions act as a guide for determining the appropriate crowd, incentives, and callout methods.

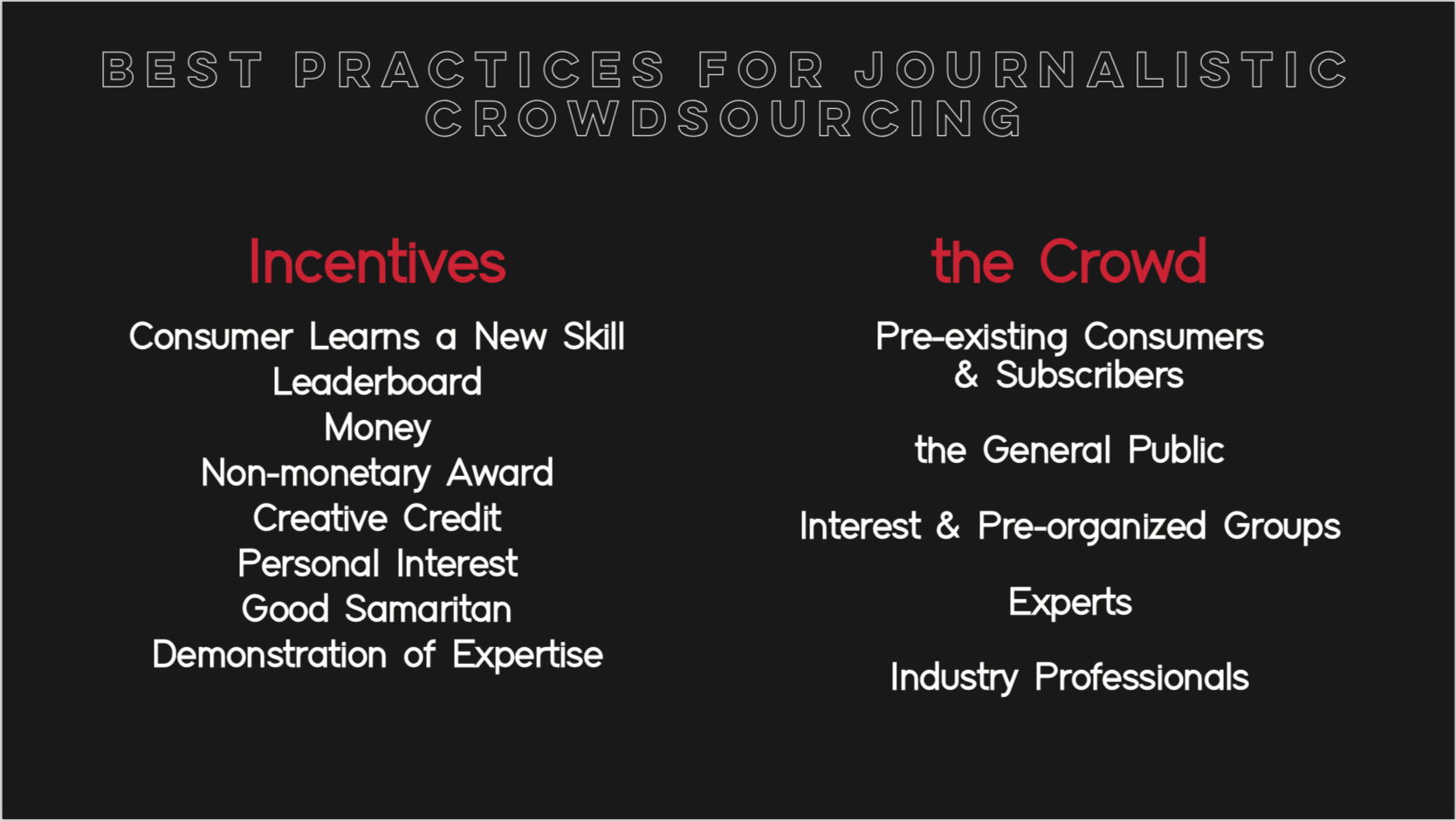

The Crowd

Defining the audience is imperative in order to identify potential solutions to the problem at hand. From the 50 compiled crowdsourcing examples, we identified five crowd subcategories. Each example successfully solves a problem, whether by identifying real-time traffic to provide the fastest commutes or by compiling customer testimonials for product development and enhancement. All members of the “crowd” fall within some pre-existing group(s), by nature or by personal initiative.

The outcome of a crowdsourcing effort is heavily dependent on the desired crowd and your ability to reach that population. Typically, the more specific the request, the more targeted the crowdsourcing effort. Some tasks do not depend on a specialized demographic: the general public is commonly used in large crowdsourced projects that elicit responses in great numbers. We define the following five groups that could be targeted in a journalistic crowdsourcing effort:

- Pre-existing Consumer Base/Subscriber Base–Previously established group of consumers that already use a specific product or consume the newsroom’s content.

- General Public–All individuals without reference to any specific characteristic. This is the broadest and largest of the crowd subgroups.

- Interest Groups/Pre-organized Groups–A group of people with a common interest or concern; a group with a defining commonality, such as age or gender. Generational groups are among the most common interest groups. Many crowdsourcing efforts target younger individuals, such as millennials, through specific callout and incentive methods.

- Experts–People with the skill, specialization, or authority in a particular field that a specific crowdsourcing task requires.

- Industry Professionals–People who have held an occupation and undergone special training in a particular industry.

The Tow Center, however, found that smaller, more targeted audiences may provide higher-quality responses than those gathered in a callout to the general public. Certain callout methods gain more traction depending on the trends and behaviors of the targeted demographic. Additionally, different demographics value certain crowdsourcing incentives differently.

Incentives

When conducting any form of crowdsourcing, a low response rate is one of the most common obstacles journalists face. People generally are not motivated to complete a task without receiving some form of compensation, whether material or immaterial.

Crowdsourcing efforts typically cannot be successfully executed without the implementation of an incentive for the targeted audience. Incentives motivate participants and satisfy an underlying desire of the consumer through individual or general motives.

The strategic implementation of incentives in crowdsourcing helps improve turnout and performance and engages participants more effectively, all important components in creating a successful platform with optimal reach (Stolovich). The Tow Center’s 2015 case study on crowdsourcing defined the practice with regard to journalism, but its findings still hold true for non-journalistic efforts. While the Tow Center study was extremely comprehensive, it did not directly cover much on incentivization.

The Tow Center briefly touches on the “element of reward”; our research, however, has led us to believe there is a strong correlation between incentives and a high response rate (Onuoha). By analyzing a variety of strong crowdsourcing examples, not limited to journalism-centric projects, we identified eight unique forms of incentive, defined as follows:

- Consumer Learns a New Skill/Gains Knowledge–To acquire information or improve on a skillset. For example, Duolingo offers users the opportunity to learn a new language as payment for their translations (Furuichi and Seidel, row 2).

- Leaderboard–To earn a ranked position within a field of competitors. For example, Eyewire, a brain-mapping game created at an MIT lab, ranks players based on their scores (Furuichi and Seidel, row 3).

- Money–To earn monetary compensation. For example, Airbnb offers money to individuals who host guests in their homes (Furuichi and Seidel, row 14).

- Non-monetary Award–To earn non-monetary compensation. Yelp is a prime example of a crowdsourcing tool that does not offer money as a form of incentive. Instead, Yelpers are rewarded with Yelp scores, a vast online community, and free promotions at restaurants and other check-ins (Furuichi and Seidel, row 7).

- Creative Credit–To be credited or mentioned for an original creation. National Geographic, with its monthly user photo submission, offers submitters creative credit for their photo if it is accepted into the magazine (Furuichi and Seidel, row 16).

- Personal Interest–To satisfy personal motives or concerns. Cicada Hunt, an app that tracks cicadas and their noises through the crowd, is used by people with a personal interest in sustainability and cicadas (Furuichi and Seidel, row 22).

- Good Samaritan–To be a charitable or helpful person without expecting compensation. For example, the If You See Something, Say Something campaign depends on anonymous tip submissions to improve public safety (Furuichi and Seidel, row 18).

- Demonstration of Expertise–To demonstrate personal skill or knowledge. Wikipedia, probably the most famous crowdsourcing effort, calls on the crowd’s expertise in specific subjects (Furuichi and Seidel, row 8).

Most crowdsourcing efforts are not limited to a single incentive but combine several. Within just the examples we examined, a handful of common pairs arose:

- Money and Creative Credit–For example, the Lay’s “Do Us a Flavor” contest annually awards one winner with a million dollars and credit for the most successful new chip flavor submission.

- Personal Interest and Demonstration of Expertise–For example, Quora allows users to both answer user questions and ask their own questions.

Being recognized or receiving a shout-out for a notable achievement produces a sense of glory for most people. Glory can also be a product of any of the eight incentives discussed above that might motivate an individual to participate in a crowdsourcing effort. Many of the incentives fall under a broader personal interest motive: individuals are naturally motivated to share their own experiences to attain some form of personal satisfaction.

Regardless of the demographic you plan to reach, be transparent and explain to your audience exactly what they will receive in return for their contribution. An incentive is not strictly required, as some individuals are driven entirely by the “Good Samaritan” motive. Our findings, however, showed that the best results came from platforms with some form of incentive.

Callout Methods

All crowdsourcing efforts begin with a callout to engage the target audience. All journalistic crowdsourcing efforts will take one of the two following forms: an unstructured or structured callout. Unstructured callouts, as the name suggests, are open appeals to the public to contribute votes, calls, or any other material they wish to submit to a news organization/journalist. Structured callouts, however, are specific outreaches by a news organization to have their target audience complete a defined task. Most submitted tasks from structured callouts are stored in databases.

According to the Tow Center, journalistic crowdsourcing requires a specific callout that encourages volunteers to feel like real news contributors. Journalistic crowdsourcing has faced many limitations, however. To overcome them, it should also embrace the classic unstructured callout, both to garner greater participation and to expose journalists to new call-to-action methods.

The Tow Center describes six different forms of structured callout: voting, witnessing, sharing personal experiences, tapping specialized expertise, completing a task, and engaging audiences. To reach their crowd, journalists must use varying technologies and approaches. We have outlined the following seven technologies and approaches journalists should consider when attempting a journalistic crowdsourcing effort:

- Website–Eighty-six percent of Americans have internet access, so websites should be used as a callout method when targeting large numbers of the population (Anderson). Using a website for a callout can take two forms. The first is using a newsroom’s own website to advertise its call to action. The second is using a newsroom’s social media presence on websites such as Twitter or Facebook to publicize its call to action. If journalists want to engage the entire general public or a pre-existing consumer base, we suggest combining this method with applications to gather the greatest participation.

- Application–Similar to a website, application callouts can take two forms: first, using a newsroom’s own app to advertise its call to action; second, using a newsroom’s social media presence on applications such as Facebook or Twitter to publicize its call to action. This should be used to reach a pre-existing consumer base, as callouts in either a newsroom’s own application or a social media application will be seen by followers that already exist. Appealing to pre-existing consumer bases generally garners more experts.

- Television–This is the most open and accessible approach to publicizing one’s crowdsourcing efforts. Ninety-seven percent of American households have televisions, so this is the suggested method when journalists want contributions from the general public (Nielsen). This is not the suggested method for attracting experts or other interest groups.

- Radio–Ninety percent of Americans above the age of twelve listen to the radio every week (Center for American Progress). Using radio advertising as a means of publicizing a crowdsourcing effort is suggested for efforts that aim to mobilize members of the general public. This is not suggested for targeting younger interest groups.

- Email–Using email as a call to action is best when one wants to attract a pre-existing consumer base, such as a listserv. Email targets active consumers of a newsroom’s content, so this is a strongly suggested method for a crowdsourcing effort looking to gain input from experts, followers, or people with a personal interest in a subject matter.

- Mail–Mail is, in general, not a recommended means of contacting volunteers for crowdsourcing. Because mail demands a greater investment from volunteers, such as mailing a response back, it is not advised as a means of communicating a crowdsourcing endeavor.

- Word of Mouth–Using word of mouth to gain crowdsourcing volunteers and responses is not recommended for large-scale crowdsourcing efforts. It is best implemented by college journalists or college newsroom publications, as word of mouth travels more effectively in smaller, more densely packed populations.

Choosing the correct means of reaching a crowdsourcing audience is vital to the success and outcomes of a crowdsourcing effort. As seen, many of these methods are dependent on the identity of the crowd. As a result, we recommend journalists think critically about who their crowd is before attempting to publicize their crowdsourcing effort. It is also important to note that many of these technologies and approaches can and should be used together.

Measuring Success

To determine the success of their crowdsourcing efforts, journalists must establish tangible outcomes and methods for measuring them. To accomplish this, we suggest the following:

- Creating realistic original intentions and metrics. As mentioned in the original intentions section, we suggest that journalists undertaking crowdsourcing efforts clearly set out the intentions they hope to achieve through their project. This means having a strong understanding of one’s reach through the different callout methods and asking yourself: how many people will receive my message based on the channels I use to reach out to my established crowd? Having a clear understanding of the number of people that will be reached gives a quantitative basis for judging crowdsourcing success.

- Having organized databases/data tables to capture incoming crowd contributions. This helps make sure that all data will be correctly captured, stored, and analyzed. In addition, it provides a clear understanding of final numbers, which can be compared to overall reach to determine a percentage of success.

- Accepting final outcomes that differ from original intentions. Many crowdsourcing efforts deviate from their original intention and still produce meaningful results. This also means being okay with failure. Following all the appropriate steps does not guarantee success, as all crowdsourcing efforts depend heavily on humans, who are incredibly variable. In addition, success is not just a participation metric; contributions must also be analyzed against the original intention to determine success.

By setting clear and tangible goals, formulating methods to organize and compile data, and understanding that original intentions may not always correspond to actual outcomes, journalists can both quantitatively and qualitatively measure their achievement.
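As a rough illustration of the comparison between final numbers and overall reach, the following Python sketch computes a response rate and the share of usable contributions. It is only a sketch under stated assumptions: the `Contribution` record, the `response_rate` and `usable_share` helpers, and the sample figures are all hypothetical and do not come from any project we analyzed.

```python
from dataclasses import dataclass


@dataclass
class Contribution:
    """One crowd submission (hypothetical record layout)."""
    contributor: str  # name or ID of the person who responded
    channel: str      # callout method that reached them, e.g. "email"
    usable: bool      # did an editor judge it relevant to the original intention?


def response_rate(contributions, estimated_reach):
    """Percentage of the people reached who actually contributed."""
    if estimated_reach <= 0:
        raise ValueError("estimated_reach must be positive")
    return 100 * len(contributions) / estimated_reach


def usable_share(contributions):
    """Percentage of contributions that served the original intention."""
    if not contributions:
        return 0.0
    return 100 * sum(c.usable for c in contributions) / len(contributions)


# Made-up example: a callout estimated to reach 2,000 people
# draws 50 responses, 40 of which are usable.
responses = (
    [Contribution(f"reader{i}", "email", True) for i in range(40)]
    + [Contribution(f"reader{i}", "website", False) for i in range(40, 50)]
)
print(f"Response rate: {response_rate(responses, 2000):.1f}%")
print(f"Usable share: {usable_share(responses):.1f}%")
```

Keeping the two figures separate reflects the point that participation alone does not define success: a callout can draw many responses and still produce little that serves the original intention.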

It is also important to note that establishing metrics and clear goals for a journalistic crowdsourcing project helps a newsroom create multiple “templates” for different crowdsourcing efforts. Ultimately, this creates a guideline that future crowdsourcing attempts can follow and modify to their needs.

Takeaways

From our analysis, we suggest the following to journalists who want to make a crowdsourcing effort:

- Clearly identify your goals for undertaking a crowdsourcing effort. Establish your original intentions in a clear and concise way, so your project does not lose sight of your original goals.

- Determine which type of crowd best fits your crowdsourcing effort. Journalists should ask themselves: do I want a crowd of experts? Of beginners? Of anyone with interest? Having a targeted crowd helps determine which incentives and callout methods are implemented during a crowdsourcing effort.

- Incentives are a necessary component of any crowdsourcing project in mobilizing and generating work from a crowd. Creating incentives that best fit the crowd will create greater traction and help form a successful crowdsourcing effort.

- Selecting the correct callout method for reaching your crowd is vital to the success and final outcomes of a crowdsourcing project. Choosing the appropriate callout method is incredibly dependent on the targeted crowd, so have a strong understanding of your crowd before selecting one of the seven callout methods.

- Measure the success of a crowdsourcing effort to establish what you did well and what could be improved in your next project. Determining these measures can be important in improving your crowdsourcing abilities. To measure the success of your project, have clear and tangible original intentions, organize and clean your data, and be willing to accept your final outcomes.

Over the 10-week span of our research and trials, these takeaways resonated most with us. Our findings, however, are not a one-size-fits-all solution to every journalistic crowdsourcing effort. Every project and crowd is unique and may warrant variation on a case-by-case basis, but we hope you can use this as a guide to establish measurable goals and achieve successful outcomes.