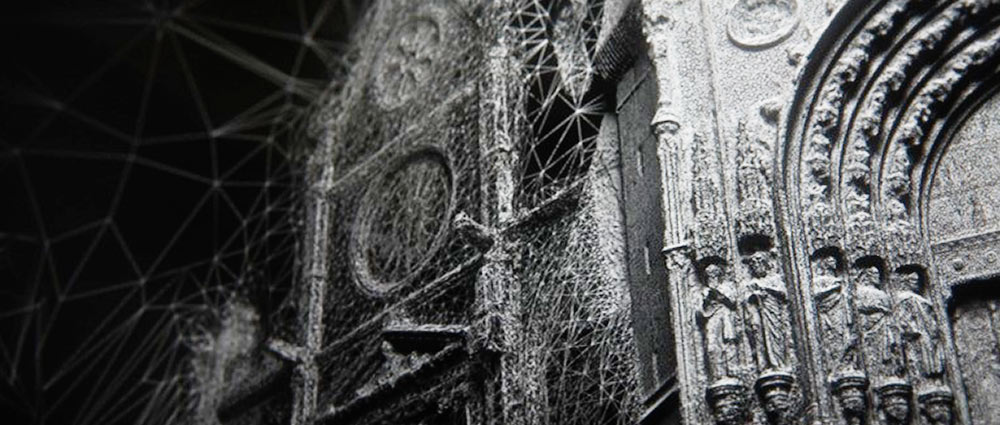

While employing photogrammetry as our main tactic for creating 3D reconstructions of history, we faced the same limitations that previous groups have experienced the subjects of these models have to be still, and the video must capture a sufficient amount of information about the depth and dimensions of an object in order to work. However, we also faced the added challenge of using found footage instead of footage that we took ourselves.

Photogrammetry Video Requirements and Limitations

We quickly realized that we could only use certain videos that fit a set of specific criteria:

- Relatively high video quality (1080p and colorized preferred)

- Consistent background and lighting

- Still subjects or subjects displaying minimal movement.

- Background movement is permissible, but the main subject has to be clearly identifiable.

- Videos must capture at least a 45 degree view of an object or show at least two sides of a subject.

- Video clips must be able to produce at least 20 recognizable photo frames.

Initially, we tried to use a variety of historic footage available to us. We discovered that a lot of historic footage is often to grainy for software to recognize, and traditional black and white footage or inconsistent lighting also make it difficult for software to identify subjects.

We then tried combining pictures of a single object all taken from separate sources. We gathered about 20 pictures of the Parthenon in Athens, all taken from different angles. However, because of the varied lighting and positioning of the pictures, the photogrammetry software was also unable to identify enough points to create a model.

The need for high quality, consistent photo inputs limits us to fairly recent historical footage. However, we did discover an exception: footage from the documentary World War II in Color seems to model fairly well, so it appears that colorizing historic footage might be a way to increase the quality of old footage, making it feasible to model earlier historic moments.

While it is preferable that the found footage also captures the object circling the object, it also seems that we can reconstruct certain buildings and limited views of streets when the camera pans straight down the street, as long as a sufficient amount of depth information is provided.

Methods and Software

Once we found our videos, we would export these videos into 20-40 separate .png picture files using Adobe Photoshop. This was done by exporting the videos into separate layers and taking usually every 5th frame in the video.

We used PhotoScan and RealityCapture, which are both photogrammetry software. After running trials using both software, we received mixed results. While PhotoScan did capture certain models better, ultimately RealityCapture usually produces a cleaner and more reliable model than PhotoScan. Photoscan was not able to recognize a subject in the case of the Serena Williams video, while RealityCapture produced a pretty accurate model for the frame of reference it was given. However, it does seem that PhotoScan is better able to capture and model background activity if it does sense any points.

As for presentation methods, we uploaded our models on Sketchfab, but in doing so, we lost a lot of the model’s accuracy and quality. RealityCapture also creates videos of the model, which would allow us to display the model in full quality, but we lose the interactive aspect of 3D modeling.

Further Questions

We also briefly entertained with the possibility of modeling subjects that move rotationally in a video. Theoretically, this would provide enough depth information about the subject to allow us to create a partial 3D model. However, the software cannot identify the subject if the subject dynamic. Therefore, we would need to track the moving object and mask the rest of the background in order for the subject to be modelable.

Tracking and masking each video would of course make the current process a lot more tedious and time consuming, but we believe that it would be a worthwhile pursuit for the future because history’s most interesting moments often involve moving pieces.

Additionally, many of the pieces of footage that we found featured busy backgrounds, which often interfered with the quality of the model - we experimented with masking the backgrounds of these videos, and leaving the subject model, which returned bad results. We also think this could be an area to explore down the line.